A growing C# codebase tells you when it has outgrown its shape. Pull requests start to overlap. Merge conflicts surface in places that have nothing to do with each other. A small change to a price rule breaks a test in the audit log.

The first reaction is to draw up a microservices plan. The better one is to step back and ask why the boundaries inside the existing code are not holding up. For many teams, the answer to that question leads to a modular monolith.

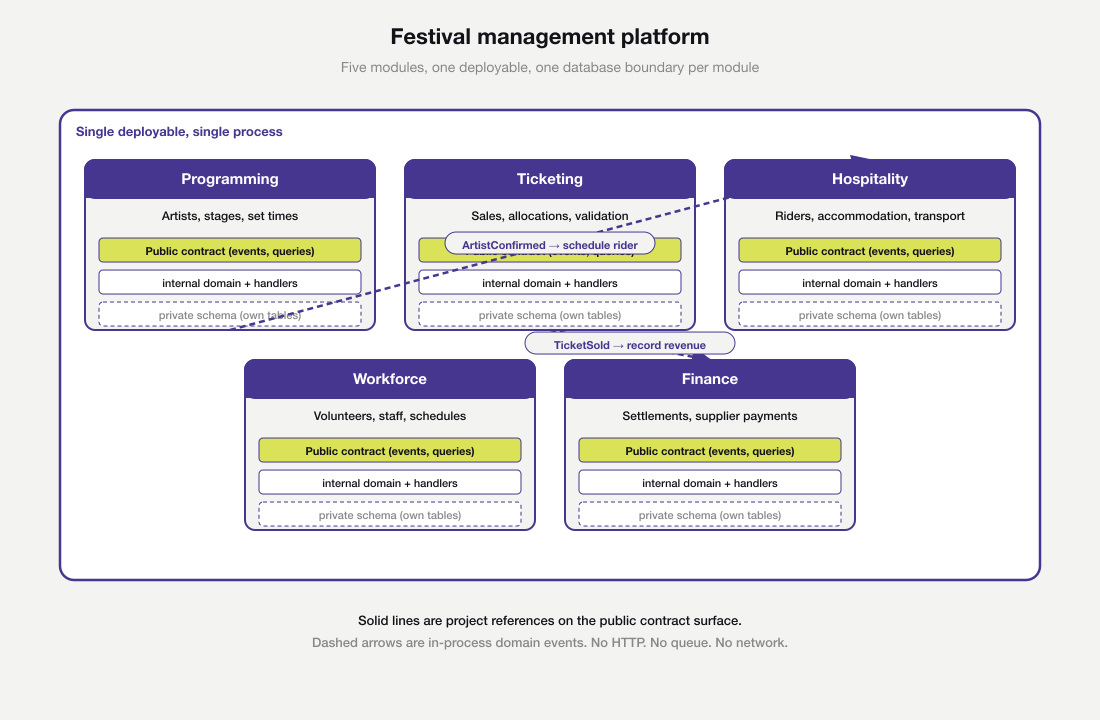

A modular monolith is one app, one process, one deploy, split inside into modules. Those modules talk to each other on small, deliberate contracts. It runs as a single process, but its boundaries are designed with the discipline a distributed system would demand. Project layout is not the hard part. Drawing the boundaries is.

This post walks through how to design a modular monolith in C# from first principles, and how to find the right boundaries to draw in the first place. The worked example is a music festival management platform. The patterns that erode modularity over time get the same attention as the patterns that establish it.

What a modular monolith is, and what it isn’t

A modular monolith is a single deployable, single process application whose internal structure is divided into modules with private state and public contracts. Each module owns its own data. Each module exposes a thin surface that other modules use. Inside a module, the team can pick whatever internal style suits the work, from layered code to vertical slices. Outside, the boundary is fixed.

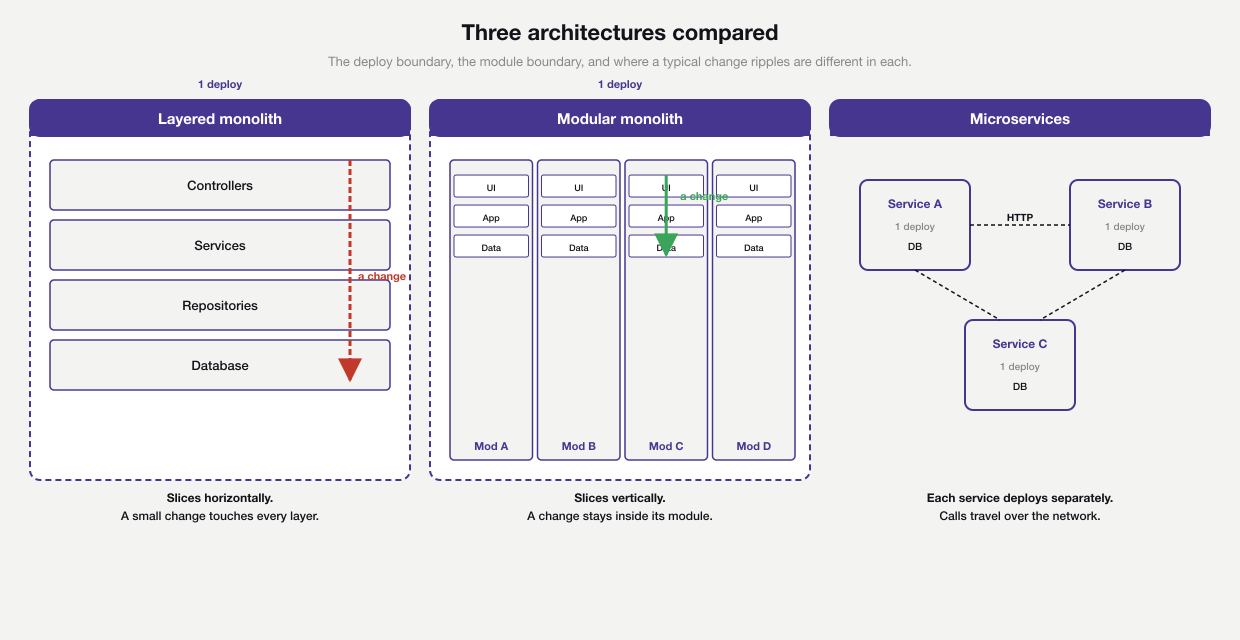

A modular monolith borrows from both ends of the spectrum. From microservices on the right, it borrows real module boundaries that hold up under team-scale pressure. From the layered stack on the left, it borrows the simplicity of one process and one deploy. The middle column is the trade-off in picture form. A feature change stays inside one module and pays no network tax. The price is the discipline of keeping module boundaries real when no network is there to enforce them.

It is not a layered monolith. A layered monolith slices the system horizontally (controllers, services, repositories), so a small business change still touches every layer. Modules slice vertically along business capability. A change to ticketing stays in the ticketing module.

A modular monolith is also not a hidden distributed system. There is no internal HTTP. No message broker sits between modules unless someone adds one on purpose. Cross-module calls are method calls. Cross-module facts travel as in-process domain events.

The pattern is closely related to hexagonal architecture, but at a different scale. Hexagonal architecture sits inside one module. The modular monolith composes those modules into a system. The two work well together. They are not in tension.

The four lenses for modular monolith design

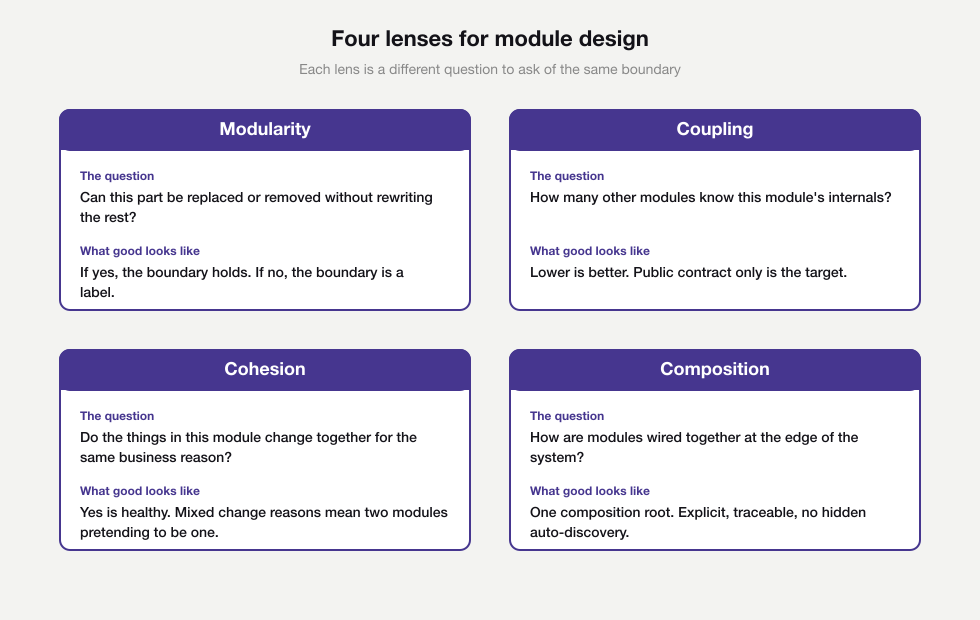

Module boundaries in a modular monolith are easy to draw on a whiteboard and hard to keep in code. Four lenses help. Each one asks a different question of the same proposed boundary. A boundary that holds up under all four is worth committing to. A boundary that fails one is a sign the line has been drawn in the wrong place.

Modularity

Modularity is the property of being separable. The question is simple. Could this part of the system be replaced, removed or rewritten without rewriting the rest? If the answer is yes, the boundary is real. If not, the boundary is a label on a folder.

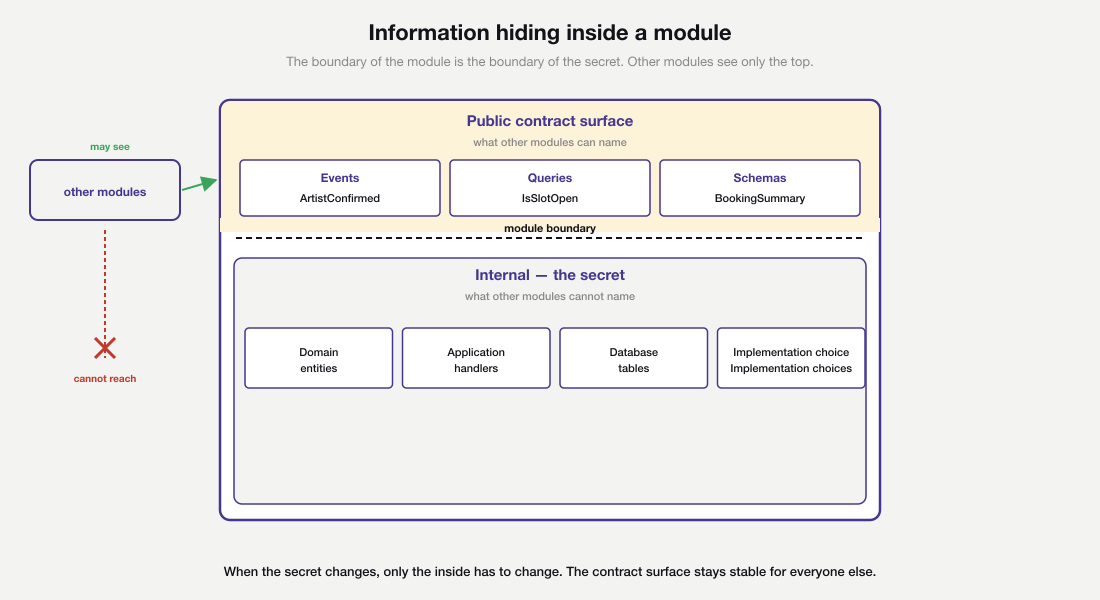

David Parnas gave the practical definition in 1972 in his paper on decomposing systems into modules. A module hides a design decision that is likely to change. The boundary of the module is the boundary of the secret.

The asymmetry in the diagram is the point. Inside the module, a change costs nothing outside, because nothing outside knows the inside exists. The contract surface on top is the opposite. Changes there cost every consumer, because the surface is what every consumer is bound to. Healthy modules in a modular monolith push as much weight as possible below the line, where changes stay cheap.

A car dashboard illustrates the same idea. Drivers do not need to know how the engine manages combustion in order to drive. The bonnet hides that secret. When an engineer changes how the engine works, drivers are unaffected, because the surface they depend on (the steering wheel, the pedals, the dashboard) has not moved.

Code on the inside knows the secret. Code on the outside does not, and does not need to. When the secret changes, only the inside has to change.

This is a design property, not a runtime property. It does not require that modules ever actually be replaced. It requires only that they could be, with a known and finite cost. When this property erodes, every change ripples wider than it should.

Coupling

Coupling measures how much one module knows about another. Two modules are loosely coupled when one can change its insides without forcing changes in the other. They are tightly coupled when even a private rename spreads across pull requests in other teams.

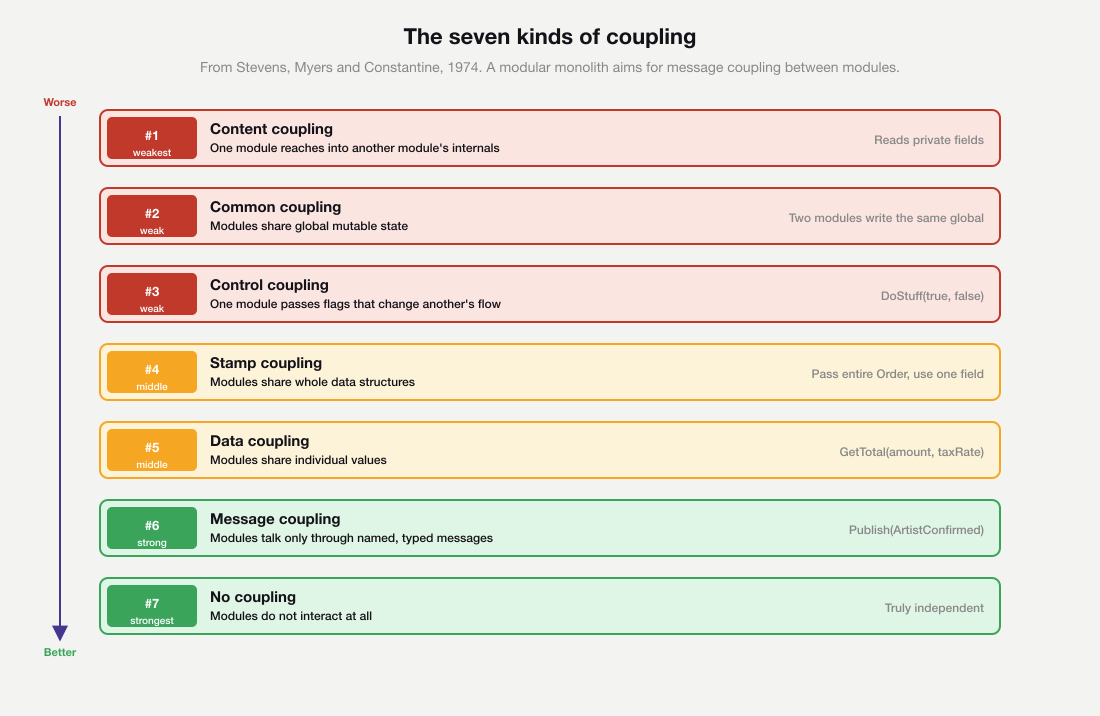

Stevens, Myers and Constantine introduced coupling as a measure of design quality in 1974, and the ladder that grew out of that work still holds today. A common seven-rung version runs, from worst to best: content (one module reaches into another’s internals), common (modules share global state), control (one module passes flags that change another’s flow), stamp (modules share whole data structures), data (modules share individual values), message (modules talk only through named, well-typed messages) and no coupling at all.

A useful way to picture the ladder is to think of three flats in the same building. Content coupling is one flat reading another flat’s diary. Common coupling is the two flats sharing a fridge whose contents nobody quite controls. Message coupling is the two flats sending each other letters. The letters are typed, named and addressed, and either flat can redecorate without disturbing the other.

A modular monolith aims for message coupling between its modules. The public contract is the only surface other modules know about. Internal types are unreachable from outside the module. Database tables are not shared. Every cross-module dependency in a modular monolith runs through a named, deliberate seam.

Cohesion

Cohesion measures whether the things inside a module belong together. The honest test is to ask whether the contents tend to change for the same business reason. A module that holds artist bookings and ticket pricing fails this test. The two evolve at different cadences for different stakeholders. They will pull the module in opposite directions.

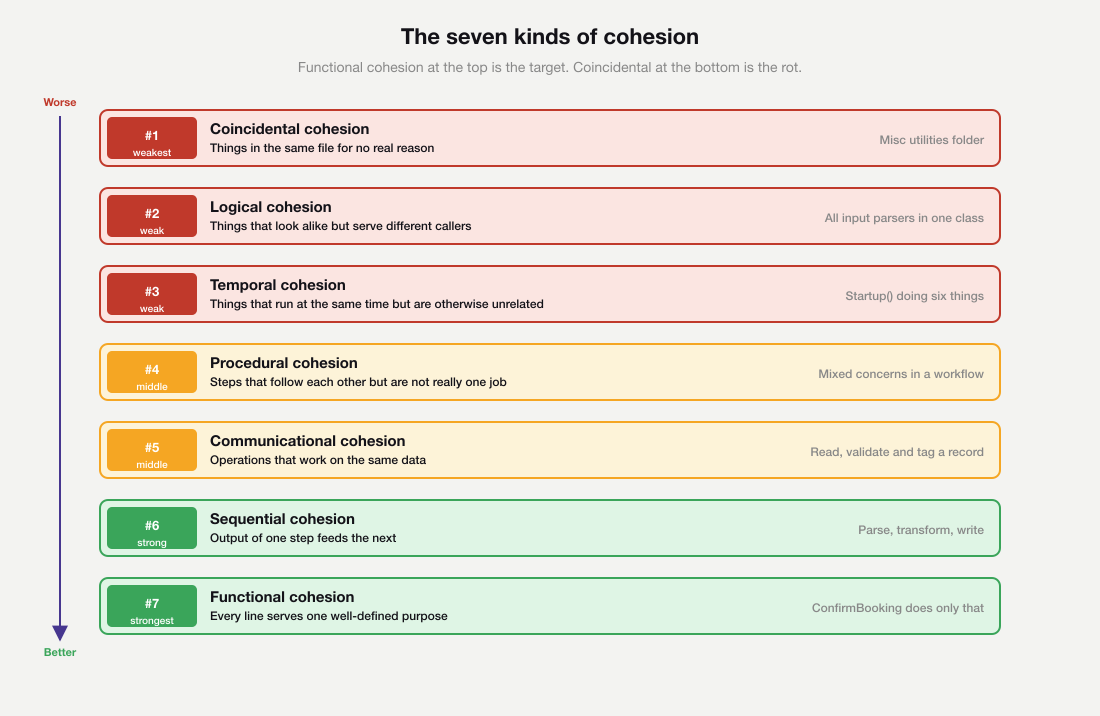

The classic taxonomy ranks cohesion from coincidental at the weakest end (things that happen to be in the same file), through logical (things that look alike but serve different callers), temporal (things that run at the same time but for unrelated reasons), procedural (steps that follow each other but are not really one job), communicational (operations on the same data), sequential (output of one step feeds the next), to functional cohesion at the top, where every line serves one well-defined purpose.

A kitchen brigade is a useful picture. Coincidental cohesion is a desk drawer of unrelated stationery, a stapler next to a battery next to a postcard. Communicational cohesion is three cooks working on the same plate, each contributing different parts of the same dish. Functional cohesion is one cook making one dish from start to finish, where every motion serves the same outcome.

Functional cohesion is what you want. High cohesion lets the module be reasoned about as a single unit. Low cohesion produces files that share a folder but nothing else. Folder structure is a symptom; the underlying cause is a missing boundary.

Composition

Composition is how the modules wire together at the edge of the system. Modules in a modular monolith do not assemble themselves. One file, the composition root, registers each module’s services and wires up the cross-module event handlers. It is the only file that knows the names of every module at once.

Mark Seemann’s pattern of a single composition root is the useful frame here. Object graphs are constructed in one place, near the entry point, with explicit calls. There is no auto-wiring that scans assemblies at startup, because that kind of magic hides who depends on what. The composition root is short, ugly and honest. It is the price of keeping the rest of the system clean.

Explicit composition is what keeps the system honest. It also makes module ownership easy to audit. If you cannot read the composition root in five minutes and understand how the modules fit together, the architecture has drifted.

Approaches for finding good module boundaries

The lenses tell you whether a boundary is good once you have drawn it. They do not tell you where to draw it in the first place. Drawing module boundaries is the harder problem, and a more subjective one.

No algorithm produces correct modules from a domain. No rule of thumb works in every codebase. Every team that succeeds with a modular monolith arrives at their boundaries through a combination of techniques, applied with humility, and revisited as their understanding deepens.

It is worth saying plainly. Modular monolith design is context-dependent. What works for a payments platform with strict regulatory boundaries does not work for a CMS where the natural seams follow editorial workflow. Avoid dogma. The right number of modules is whatever the problem actually has, which you will not know on day one.

Five techniques cover most of the useful ground for discovering modular monolith boundaries in 2026. They approach the problem from different angles, and they are at their most useful when used together rather than in isolation. None of them is a substitute for spending time with the people who use the system.

Event Storming

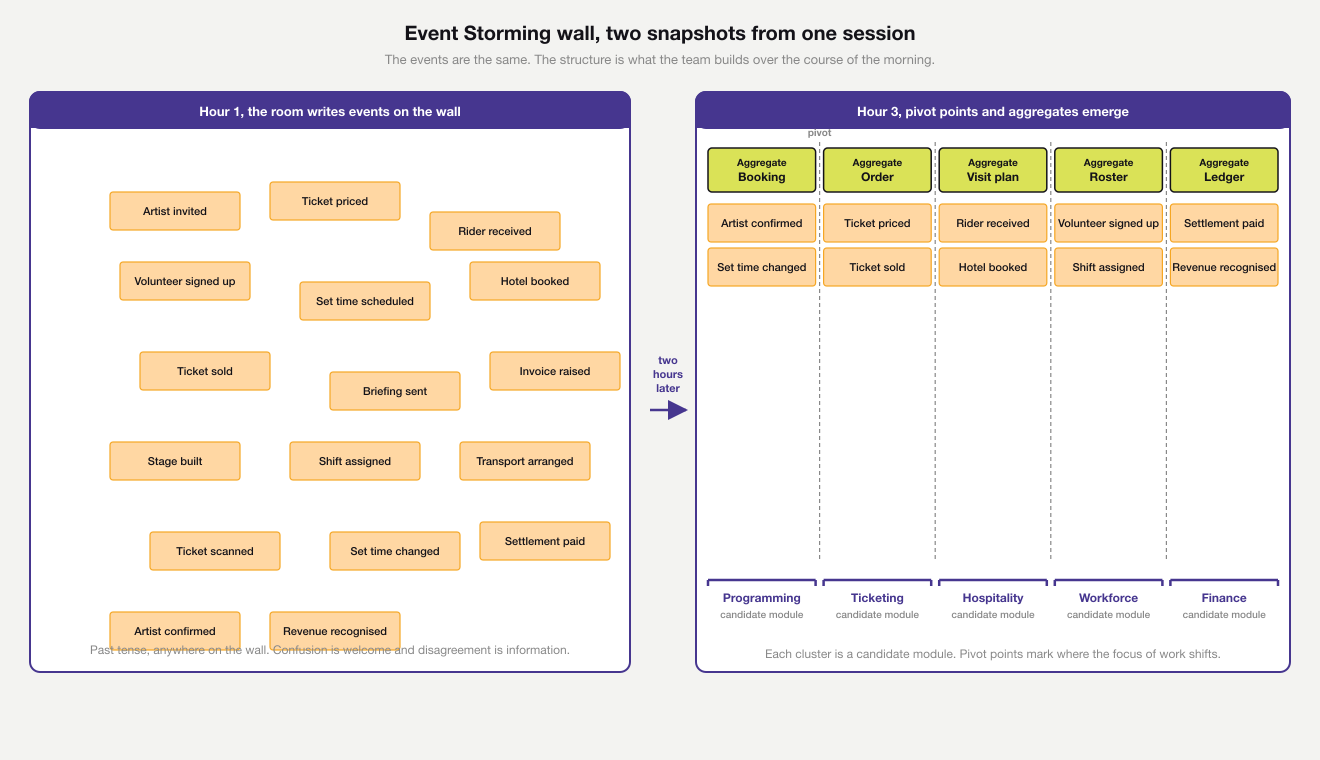

Event Storming, originated by Alberto Brandolini, is a collaborative modelling technique for surfacing the events that matter in a domain. A wide wall, a long roll of paper and a lot of orange sticky notes are the only tools. Domain events go on the orange notes, in past tense (‘booking confirmed’, ‘ticket scanned’, ‘payment received’). Commands, aggregates and policies join later, in their own colours, as the picture sharpens.

A session moves through three stages. The first hour is divergent. Stakeholders write events anywhere on the wall, in past tense, and disagreement is information. The next stretch pushes the events into rough chronology, where pivot points appear. A pivot point is a moment where the focus of work shifts from one type of work to another.

The final stretch names the aggregates, the units that protect invariants for related events. A Booking aggregate handles ‘artist confirmed’ and ‘set time changed’. A Roster aggregate handles ‘volunteer signed up’ and ‘shift assigned’. Where several events cluster around shared aggregates, the team has a candidate module.

The left side of the diagram is hour one, a scatter of past-tense events. The right side is hour three, with pivot points and aggregate cards in place and brackets showing where each candidate module begins and ends. Modules are discovered during the session, not designed before it.

Three flavours exist. Big-picture Event Storming maps the whole domain at low resolution, suitable for a half-day workshop with people across the business. Process-level Event Storming zooms into one workflow. Software-design Event Storming goes deep enough to suggest aggregates and module boundaries directly.

Event Storming is at its best when the domain has many stakeholders and the team is unsure where the natural seams lie. It is less useful when the domain is tiny or already deeply understood.

Value stream mapping

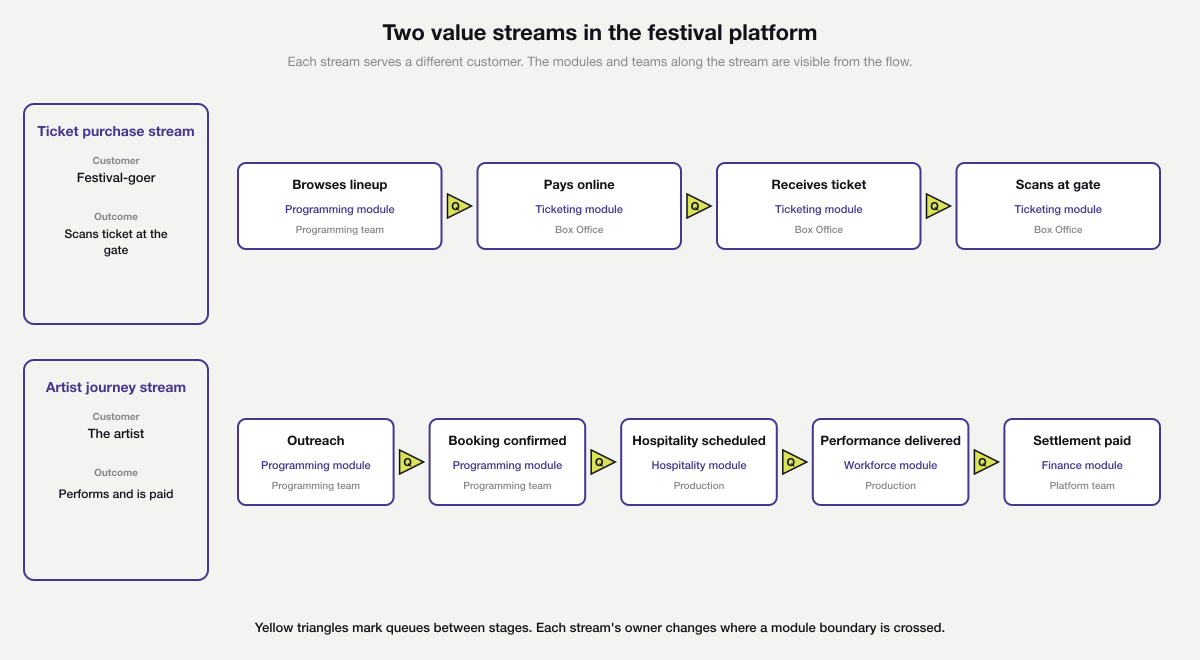

Value stream mapping comes from Lean. It traces the flow of value from a triggering event (a customer requests something) to the moment value is delivered. Each step shows the work, the time it takes, the queue before it and who owns it. The result is a candid picture of where work waits and where handoffs happen.

Picture a parcel moving through a sorting depot. Trace its path: where it lands, where it queues, where hands change, where it gets stuck. The blocked sections and the standing pools are visible immediately. Value stream mapping does the same for work.

For module design, the technique reveals where teams currently stop and start. Stages owned by different teams with little communication between them are usually good places to draw a module boundary. Stages where a single team does many things at once suggest a single module that may need to be subdivided later.

The diagram traces two festival value streams as horizontal swim lanes. Above is the ticket purchase stream, owned almost entirely by Box Office in the Ticketing module, with one upstream handoff from the Programming team where the lineup is published. Below is the artist journey stream, which crosses three modules (Programming, Hospitality and Finance) and three teams along the way. Yellow triangles between stages mark queues, the places where work in a modular monolith waits.

Value stream mapping is most valuable for an existing system where flow problems are visible and team boundaries are established. It is less helpful on a greenfield product, where there is no flow to map yet.

Business capability mapping

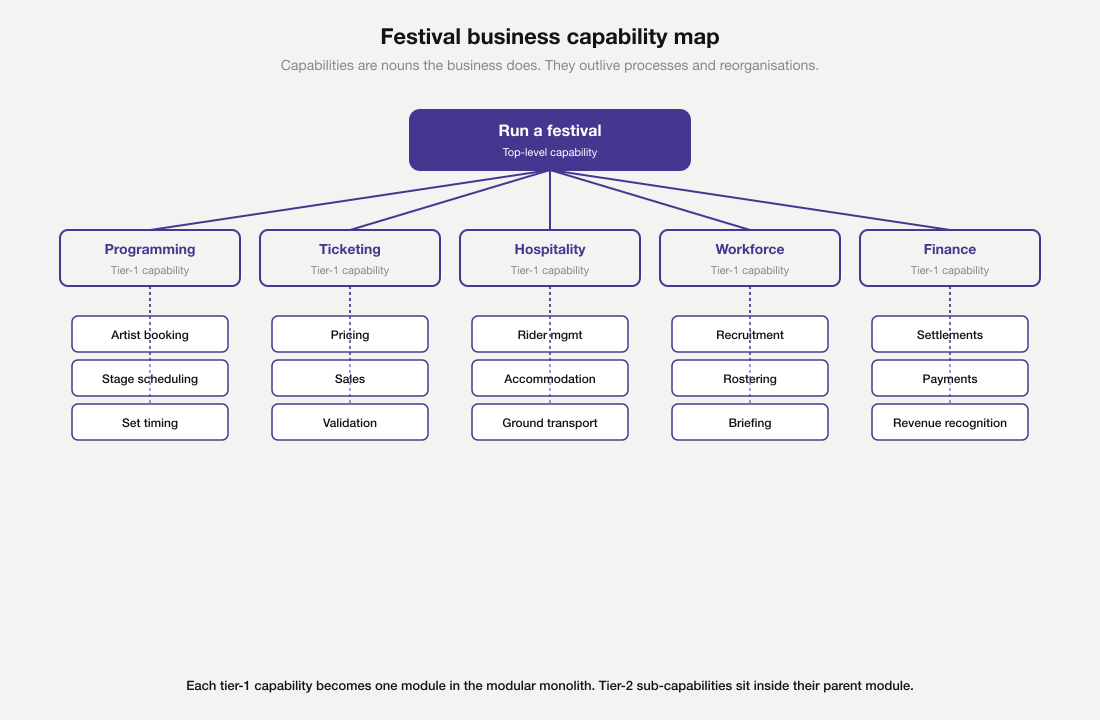

Business capability mapping comes from enterprise architecture. A capability is what the business does, phrased as a noun. ‘Ticket sales’, ‘artist hospitality’ and ‘financial settlement’ are capabilities. Capabilities compose hierarchically. The festival has hospitality, which contains travel, accommodation and dietary management.

Think of a building. Capabilities are the steel frame. Processes are the walls, which can be moved every few years. Tools are the furniture, which gets replaced every season. An architecture anchored to the steel frame outlives the wallpaper.

Capabilities are deliberately stable. Processes change. Organisations restructure. Tools rotate. Capabilities are the stable abstraction underneath all of those changes.

A module anchored to a capability has a long shelf life. A module anchored to a current process or a current team has a shelf life of about eighteen months.

Capability mapping pairs well with Event Storming. The events surface the dynamic behaviour. The capabilities surface the static structure. When the two agree, the boundary is well-supported. When they disagree, you have a question to ask the business.

User needs mapping

User needs mapping flips the perspective. Instead of asking what the business does, it asks what users are trying to achieve. The Jobs-to-be-Done framing helps here, but any honest user research counts. List the jobs, the contexts in which they arise, and the outcomes the user is hoping for.

Modules anchored to user needs tend to stay coherent because users’ jobs change slowly even when the business reorganises around them. A module called ‘ticket purchase’ might span several internal teams, but it survives every internal restructure because the user need does not move.

User needs mapping is most useful when the system is consumer-facing and the internal organisation has churned a few times. It is less useful for back-office systems with no clear external user.

Change-coupling analysis

Change-coupling analysis looks at the existing codebase rather than the domain. The git log records which files have changed together over time. Files that are modified in the same commits, again and again, are coupled in practice regardless of what the architecture diagram says. Files that rarely change together belong in different modules.

Adam Tornhill’s book ‘Software Design X-Rays’ is the definitive reference for the technique, and tools like CodeScene and the open-source code-maat can produce the analysis on a real repository in minutes. The output is a heat map that often disagrees with the official module structure.

This is a powerful sanity check on a design produced by Event Storming or capability mapping. If the design says two modules are independent and the git history says they keep changing together, one of three things is true. The design is wrong, the team is changing across boundaries it should not, or the modules secretly share a concept that should be promoted to its own module.
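For a sense of what the analysis computes, the following is a minimal sketch rather than a replacement for the tools above. It assumes the commit history has already been parsed into one list of file paths per commit (for example from the output of `git log --name-only`); the `ChangeCoupling` class and its `CoChanges` method are hypothetical names introduced for illustration.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical helper: counts how often two files appear in the same commit.
public static class ChangeCoupling
{
    // Each inner collection is the set of file paths touched by one commit.
    // Pairs that co-change fewer than minCount times are dropped as noise.
    public static IReadOnlyList<(string A, string B, int Count)> CoChanges(
        IEnumerable<IReadOnlyCollection<string>> commits,
        int minCount = 2)
    {
        var counts = new Dictionary<(string, string), int>();

        foreach (var files in commits)
        {
            // Sort so that (a, b) and (b, a) land on the same dictionary key.
            var sorted = files
                .Distinct()
                .OrderBy(f => f, StringComparer.Ordinal)
                .ToArray();

            for (var i = 0; i < sorted.Length; i++)
            {
                for (var j = i + 1; j < sorted.Length; j++)
                {
                    var key = (sorted[i], sorted[j]);
                    counts.TryGetValue(key, out var seen);
                    counts[key] = seen + 1;
                }
            }
        }

        return counts
            .Where(kv => kv.Value >= minCount)
            .OrderByDescending(kv => kv.Value)
            .Select(kv => (kv.Key.Item1, kv.Key.Item2, kv.Value))
            .ToList();
    }
}
```

Pairs at the top of the result whose paths sit in different module folders are the ones worth a conversation. Real tools also weight by recency and commit size, which this sketch ignores.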

Combining the techniques

Few teams use only one technique. A workable approach for a system in flight starts with a half-day big-picture Event Storming to surface the domain language. A capability map, drawn the same week, checks the result against the long-term structure of the business. Value stream mapping, applied to the existing flows, says where teams are already doing the work. Change-coupling analysis on the current repository says where the code is actually coupled today.

Where the four agree, the boundary is well-evidenced. Where they disagree, the disagreement is the most useful artefact. It points at a specific question the team has not yet answered, and answering it sharpens the design.

Doing all five techniques on every system is overkill. Two or three, applied with care, are usually enough to surface the boundaries that matter. The point is not to perform the techniques but to triangulate.

None of these techniques produces a final answer. The first version of the modules will be partly wrong. The second version will be less wrong. A modular monolith earns its name only when the team revisits the boundaries every six months, with the lenses and the same discovery techniques, and treats module redesign as a normal part of the work.

How the lenses translate to C# project structure

The lenses produce a small set of practical conventions for a .NET modular monolith. They cover the per-module project layout, the rules for data ownership, and the way the modules are wired together at the edge of the system.

Per-module project layout

Each module lives in its own folder of the solution. The folder usually contains three or four projects.

A Contracts project holds the events and request types other modules are allowed to see. A Domain project holds the entities and value objects. The Application project holds the handlers and ports, and an Infrastructure project covers persistence and outbound integrations.

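The Contracts project is the only one that other modules reference. Everything else inside the module is marked internal, apart from the few extension methods the host calls to register services and map endpoints. C# enforces this at compile time. A type marked internal in the Programming module simply cannot be named from Hospitality. Because internal is scoped to the assembly, projects inside the same module that need each other’s internals grant access explicitly with InternalsVisibleTo. The compiler is the boundary guard.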

Storage and ownership

Storage follows the same rule. Each module owns its own tables in its own schema. The module’s repositories are the only code allowed to read or write those tables. No cross-module joins, even when one would be quicker. A cross-module join is a coupling the compiler cannot catch.

Retrofitting onto an existing single-database system is a migration rather than a starting point. Move the most stable module to its own schema first, repoint its queries through the module’s repositories, then repeat for the next module as boundaries solidify. Schema separation is an outcome of the work, not a precondition for it.

The composition root

The host project is the composition root. It references every module’s Application and Infrastructure project. One extension method per module, like AddProgrammingModule(), registers services. The host then wires the in-process event bus to the handlers. A new engineer should be able to read Program.cs and see the system’s shape on one screen.

A working composition root is short, even for a system with five modules. The festival modular monolith host looks something like this.

```csharp
// Festival.Host/Program.cs

var builder = WebApplication.CreateBuilder(args);

// Each module owns its own registration extension method.
builder.Services.AddProgrammingModule(builder.Configuration);
builder.Services.AddTicketingModule(builder.Configuration);
builder.Services.AddHospitalityModule(builder.Configuration);
builder.Services.AddWorkforceModule(builder.Configuration);
builder.Services.AddFinanceModule(builder.Configuration);

// One in-process event bus wires the cross-module handlers.
builder.Services.AddInProcessEventBus();

var app = builder.Build();

// Each module also exposes its HTTP endpoints in its own file.
app.MapProgrammingEndpoints();
app.MapTicketingEndpoints();
app.MapHospitalityEndpoints();
app.MapWorkforceEndpoints();
app.MapFinanceEndpoints();

app.Run();
```

Every name on this page is a module. Adding a new module to the modular monolith means one line in the registration block, one line in the endpoint mapping block, and a new project reference. Removing one is the reverse, with a clean grep target.
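The in-process event bus itself can be very small. The sketch below is one possible shape, not the post's actual implementation: the interface names `IDomainEventBus` and `IDomainEventHandler<TEvent>` come from the excerpts later in this post, but their members, the `InProcessEventBus` class and its `Subscribe` methods are assumptions made for illustration.

```csharp
using System;
using System.Collections.Generic;
using System.Threading.Tasks;

// Assumed shapes: the post names these interfaces but does not define them.
public interface IDomainEventHandler<in TEvent>
{
    Task HandleAsync(TEvent @event);
}

public interface IDomainEventBus
{
    Task PublishAsync<TEvent>(TEvent @event);
}

// Hypothetical in-memory bus. Handlers are registered explicitly, so the
// composition root stays the one place that knows who listens to what.
public sealed class InProcessEventBus : IDomainEventBus
{
    private readonly Dictionary<Type, List<Func<object, Task>>> handlers = new();

    public void Subscribe<TEvent>(Func<TEvent, Task> handler)
    {
        if (!handlers.TryGetValue(typeof(TEvent), out var list))
        {
            list = new List<Func<object, Task>>();
            handlers[typeof(TEvent)] = list;
        }

        list.Add(e => handler((TEvent)e));
    }

    public void Subscribe<TEvent>(IDomainEventHandler<TEvent> handler)
        => Subscribe<TEvent>(handler.HandleAsync);

    public async Task PublishAsync<TEvent>(TEvent @event)
    {
        if (@event is null) throw new ArgumentNullException(nameof(@event));

        // Dispatch is keyed on the static type TEvent, not the runtime type.
        if (!handlers.TryGetValue(typeof(TEvent), out var list)) return;

        // Handlers run one after another on the publisher's flow. An exception
        // in one handler stops the rest and surfaces to the publisher.
        foreach (var handle in list)
        {
            await handle(@event);
        }
    }
}
```

Running handlers sequentially on the publisher's flow is the simplest choice and keeps failures visible. Whether a failing handler should fail the publisher is a design decision each team has to make for itself.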

A worked example from a festival management platform

The example is a platform for running a multi-day music festival. Today the festival runs on disconnected tools. A website sells tickets. One spreadsheet tracks artist bookings, and another tracks rider commitments. A volunteer coordinator runs an Airtable. The festival’s bookkeeper updates an accounting package by hand.

Now the team wants to fold all of this into one application, without committing to a microservices platform on day one. A modular monolith is the right shape for the job.

Discovering the five modules

Five modules emerge from a half-day of work with the people who run the festival. A morning of big-picture Event Storming with the production manager, the box office lead, the volunteer coordinator and the bookkeeper produces a wall of events: artist confirmed, set time changed, ticket sold, ticket scanned, rider received, accommodation booked, volunteer signed up, settlement paid.

Clusters form quickly. Artist and stage events live together on the wall. Ticket events form their own cluster. Rider, accommodation and transport events form a third.

A short capability mapping exercise on the same wall confirms the shape. Programming, ticketing, hospitality, workforce and finance are the long-lived business capabilities. The team decides to keep one module per capability.

A pass over a year of the festival’s existing spreadsheets and ad hoc tools acts as the change-coupling analysis. Spreadsheets that consistently get edited together turn out to sit inside the same capability. Those that rarely get edited together sit in different capabilities. The boundaries hold.

The five modules and their bounded contexts

Programming holds artists, stages and set times. Ticketing holds sales, allocations and gate validation. Hospitality holds artist riders, accommodation and ground transport. Workforce holds volunteers, paid staff and shift schedules. Finance holds settlements with artists, supplier payments and revenue recognition.

Each module is a bounded context, in the sense Eric Evans gave the term in his book on Domain-Driven Design. The word ‘artist’ means slightly different things in each one. Programming sees an artist as a booking with a stage and a slot. Hospitality sees the same artist as a party of people with travel, accommodation and dietary needs. Finance sees them as a payee with a contract and a settlement.

The naming is deliberate. Trying to share one canonical ‘Artist’ type across modules would force a single team to coordinate every change.
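In code, the three views of an artist might look something like the sketch below. Every type and property name here is hypothetical. The only thing the three types are assumed to share is the artist identifier, which is what lets events correlate across modules without a common type.

```csharp
using System;
using System.Collections.Generic;

namespace Festival.Programming.Domain
{
    // Programming's view: a booking with a stage and a slot.
    internal sealed record BookedArtist(
        Guid ArtistId, string Name, string Stage, DateOnly Date, TimeOnly SetTime);
}

namespace Festival.Hospitality.Domain
{
    // Hospitality's view: a party of people with travel and dietary needs.
    internal sealed record ArtistParty(
        Guid ArtistId, int PartySize, IReadOnlyList<string> DietaryNeeds);
}

namespace Festival.Finance.Domain
{
    // Finance's view: a payee with a contract and an agreed fee.
    internal sealed record Payee(
        Guid ArtistId, string LegalName, decimal AgreedFee);
}
```

Each type can gain or lose fields without the other two modules noticing, because none of them is visible outside its own module.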

Drawing boundaries from the domain rather than the layers

A layered codebase splits ‘controllers’, ‘services’ and ‘repositories’ at the top level. All the festival code then drops into a single bag inside each layer. A modular codebase does the opposite. Its top level is the business capability. Inside each capability the team can keep whatever internal layering serves it.

The cohesion lens does the work. Things inside Programming change when the festival’s lineup changes. Pricing-model updates pull on Ticketing instead, and settlement rules pull on Finance. Each pressure stays inside its module, so a change to set times does not pull a Finance test red.

How modules talk to each other

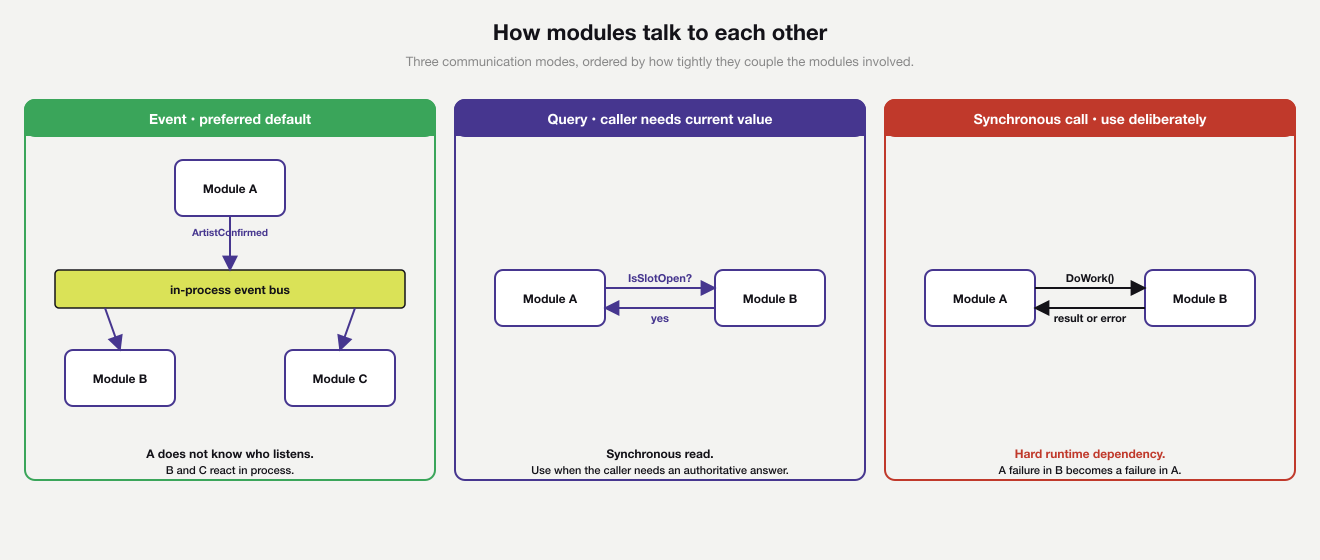

Modules need to share information without sharing internals. Three patterns cover almost every case. A public event lets one module announce that something has happened; any module that cares can react. Public queries let one module ask another a small, well-defined question and get a small, well-defined answer. Synchronous calls have one module ask another to do work and wait for the result.

The diagram orders the three modes from loosest coupling on the left to tightest on the right. Events are the loosest, because the publishing module does not know who listens. Queries sit in the middle, because both modules are involved at the same time but only one is asking. Synchronous calls sit on the right because both modules succeed together or fail together.

Events are usually the better default. They keep the publishing module ignorant of who is listening. Queries are the right tool when the caller needs an authoritative current value, like asking Programming whether a particular slot is still open.

Synchronous calls between modules are not forbidden, but they create a hard runtime dependency. A failure in the called module becomes a failure in the calling module. Use them deliberately, not by reflex.
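A public query can be sketched as an interface in the Contracts project with an internal implementation behind it. The example below illustrates the slot question mentioned above under simplifying assumptions: `IProgrammingQueries`, `SlotAvailability` and `ProgrammingQueries` are hypothetical names, a slot is reduced to a stage and a date, and an in-memory set stands in for the module's repository.

```csharp
using System;
using System.Collections.Generic;
using System.Threading.Tasks;

namespace Festival.Programming.Contracts.Queries
{
    // A small, well-defined answer. Hypothetical contract type.
    public sealed record SlotAvailability(string StageName, DateOnly Date, bool IsOpen);

    // The only surface other modules see. Hypothetical contract interface.
    public interface IProgrammingQueries
    {
        Task<SlotAvailability> GetSlotAvailabilityAsync(string stageName, DateOnly date);
    }
}

namespace Festival.Programming.Application
{
    using Festival.Programming.Contracts.Queries;

    // Internal implementation. The in-memory set stands in for a repository.
    internal sealed class ProgrammingQueries : IProgrammingQueries
    {
        private readonly HashSet<(string Stage, DateOnly Date)> booked;

        public ProgrammingQueries(IEnumerable<(string Stage, DateOnly Date)> bookedSlots)
            => booked = new HashSet<(string, DateOnly)>(bookedSlots);

        public Task<SlotAvailability> GetSlotAvailabilityAsync(string stageName, DateOnly date)
            => Task.FromResult(
                new SlotAvailability(stageName, date, !booked.Contains((stageName, date))));
    }
}
```

The caller depends on the interface and the answer record only. The answer is a snapshot, so a caller that acts on it later should expect that the slot may have been taken in the meantime.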

A code excerpt from the Programming and Hospitality modules

Confirming an artist booking is a real cross-module flow. Programming owns the act of confirming. Hospitality needs to know about the confirmation so it can start scheduling the rider. The two modules communicate through a single contract event, which lives in Festival.Programming.Contracts and is referenced by Hospitality.

```csharp
// Festival.Programming.Contracts/Events/ArtistConfirmed.cs

namespace Festival.Programming.Contracts.Events;

public sealed record ArtistConfirmed(
    Guid ArtistId,
    string ArtistName,
    DateOnly PerformanceDate,
    string StageName);
```

Inside Programming, the booking aggregate enforces the rules of confirmation, and the application service publishes the event after the aggregate has been saved. The event is an after-the-fact statement, not a request for action.

```csharp
// Festival.Programming.Application/Bookings/ConfirmBookingHandler.cs

// The contract event published at the end of the handler.
using Festival.Programming.Contracts.Events;

namespace Festival.Programming.Application.Bookings;

internal sealed class ConfirmBookingHandler
{
    private readonly IBookingRepository bookings;
    private readonly IDomainEventBus events;

    public ConfirmBookingHandler(
        IBookingRepository bookings,
        IDomainEventBus events)
    {
        this.bookings = bookings;
        this.events = events;
    }

    public async Task<Result> HandleAsync(ConfirmBooking command)
    {
        var booking = await bookings.LoadAsync(command.BookingId);
        if (booking is null)
        {
            return Result.NotFound("Booking not found.");
        }

        // The aggregate decides whether confirmation is allowed.
        var confirmation = booking.Confirm(command.ConfirmedBy);
        if (confirmation.IsFailure)
        {
            return confirmation;
        }

        await bookings.SaveAsync(booking);

        // Publish only after the save, using plain values rather than domain types.
        await events.PublishAsync(new ArtistConfirmed(
            booking.ArtistId.Value,
            booking.ArtistName.Value,
            booking.PerformanceDate,
            booking.Stage.Name));

        return Result.Success();
    }
}
```

Hospitality reacts in process. It does not call back into Programming. It already has every fact it needs, carried in the event payload.

```csharp
// Festival.Hospitality.Application/Riders/ScheduleRiderOnArtistConfirmed.cs

using Festival.Programming.Contracts.Events;

namespace Festival.Hospitality.Application.Riders;

internal sealed class ScheduleRiderOnArtistConfirmed
    : IDomainEventHandler<ArtistConfirmed>
{
    private readonly IRiderScheduler scheduler;

    public ScheduleRiderOnArtistConfirmed(IRiderScheduler scheduler)
    {
        this.scheduler = scheduler;
    }

    public Task HandleAsync(ArtistConfirmed @event)
    {
        return scheduler.ScheduleAsync(
            @event.ArtistId,
            @event.ArtistName,
            @event.PerformanceDate);
    }
}
```

Three things are worth noticing. The handler is internal, so it cannot be referenced from any other module. Its event payload is a record, immutable and small. Workforce can later subscribe to ArtistConfirmed to start planning stewards without any change to Programming or Hospitality.

One caveat is worth surfacing. The handler shown here saves the booking and then publishes the event in two separate steps. If the process crashes between them, the event is lost while the database still thinks the booking is confirmed.

A production-grade modular monolith uses the outbox pattern for this. The event is written to an outbox table inside the same transaction as the booking, and a background dispatcher publishes it afterwards. The booking and the event record are committed together, or neither is. Delivery then happens at least once, so handlers should tolerate seeing the same event twice.
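The moving parts of an outbox can be sketched in memory. This is an illustration under stated assumptions, not a production implementation: `InMemoryStore` stands in for a database transaction, the `OutboxMessage` columns are guesses at a reasonable shape, and the event is a trimmed-down, hypothetical version of ArtistConfirmed with only two fields.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Text.Json;
using System.Threading.Tasks;

// One row per pending event. Column names are assumptions.
public sealed record OutboxMessage(Guid Id, string Type, string Payload, DateTimeOffset OccurredAt)
{
    public DateTimeOffset? DispatchedAt { get; set; }
}

// Trimmed-down event for the sketch only.
public sealed record ArtistConfirmed(Guid ArtistId, string ArtistName);

public static class OutboxMessages
{
    public static OutboxMessage From<TEvent>(TEvent @event) where TEvent : notnull
        => new OutboxMessage(
            Guid.NewGuid(),
            typeof(TEvent).FullName!,
            JsonSerializer.Serialize(@event),
            DateTimeOffset.UtcNow);
}

// Stand-in for a database. Commit applies the booking change and the outbox
// rows in one step, the way a single SQL transaction would.
public sealed class InMemoryStore
{
    public Dictionary<Guid, string> BookingStatus { get; } = new();
    public List<OutboxMessage> PendingAndSent { get; } = new();

    public void Commit(Guid bookingId, string status, IEnumerable<OutboxMessage> messages)
    {
        BookingStatus[bookingId] = status;
        PendingAndSent.AddRange(messages);
    }
}

// Background dispatcher: publishes undelivered rows, then marks them sent.
// A crash between publish and mark causes a redelivery on the next run.
public sealed class OutboxDispatcher
{
    private readonly InMemoryStore store;
    private readonly Func<OutboxMessage, Task> publish;

    public OutboxDispatcher(InMemoryStore store, Func<OutboxMessage, Task> publish)
    {
        this.store = store;
        this.publish = publish;
    }

    public async Task<int> DispatchPendingAsync()
    {
        var pending = store.PendingAndSent.Where(m => m.DispatchedAt is null).ToList();

        foreach (var message in pending)
        {
            await publish(message);
            message.DispatchedAt = DateTimeOffset.UtcNow;
        }

        return pending.Count;
    }
}
```

In a real system the dispatcher would run on a timer or a hosted background service, and the commit would be a genuine database transaction covering both tables.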

Each handler is a small unit, easy to specify with a unit test and a fake scheduler. That discipline matters more once the system grows. A practice like test-driven development works particularly well in modular code, because each module owns its own test surface.
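A test for the Hospitality handler shown earlier might look like the sketch below. To keep it self-contained, the event and handler are restated without the bus interface, the `IRiderScheduler` signature is inferred from the call in the handler and may differ in a real codebase, and `FakeRiderScheduler` is a hypothetical hand-rolled fake. A real project would express the same check as an xUnit or NUnit test.

```csharp
using System;
using System.Collections.Generic;
using System.Threading.Tasks;

// Assumed port shape, inferred from the call in the handler.
public interface IRiderScheduler
{
    Task ScheduleAsync(Guid artistId, string artistName, DateOnly performanceDate);
}

public sealed record ArtistConfirmed(
    Guid ArtistId, string ArtistName, DateOnly PerformanceDate, string StageName);

public sealed class ScheduleRiderOnArtistConfirmed
{
    private readonly IRiderScheduler scheduler;

    public ScheduleRiderOnArtistConfirmed(IRiderScheduler scheduler)
        => this.scheduler = scheduler;

    public Task HandleAsync(ArtistConfirmed @event)
        => scheduler.ScheduleAsync(@event.ArtistId, @event.ArtistName, @event.PerformanceDate);
}

// Hand-rolled fake: records calls instead of doing any work.
public sealed class FakeRiderScheduler : IRiderScheduler
{
    public List<(Guid ArtistId, string ArtistName, DateOnly Date)> Calls { get; } = new();

    public Task ScheduleAsync(Guid artistId, string artistName, DateOnly performanceDate)
    {
        Calls.Add((artistId, artistName, performanceDate));
        return Task.CompletedTask;
    }
}

public static class ScheduleRiderOnArtistConfirmedTests
{
    public static async Task Schedules_a_rider_for_the_confirmed_artist()
    {
        var fake = new FakeRiderScheduler();
        var handler = new ScheduleRiderOnArtistConfirmed(fake);
        var artistId = Guid.NewGuid();
        var date = new DateOnly(2026, 7, 4);

        await handler.HandleAsync(
            new ArtistConfirmed(artistId, "The Example Band", date, "Main Stage"));

        if (fake.Calls.Count != 1 || fake.Calls[0] != (artistId, "The Example Band", date))
        {
            throw new InvalidOperationException("Expected exactly one rider to be scheduled.");
        }
    }
}
```

The test touches nothing outside Hospitality except the contract event, which is the point. A module's test suite should be able to run without any other module's internals.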

Evolving the contracts over time

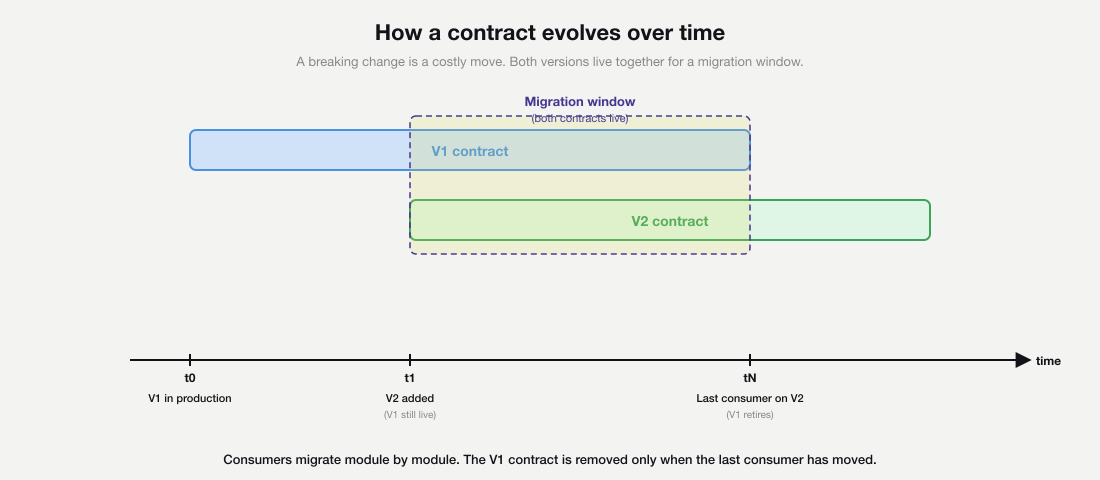

Contracts change. New events get added. Old events grow new fields. Sometimes an event splits in two as the domain refines. The discipline that keeps a modular monolith healthy is to make contract changes additive by default.

An additive change adds a new event or a new optional field. Existing handlers carry on working without changes. The publishing module rolls out the new contract first, and consumers adopt it on their own schedule.

A breaking change is harder. The contract gets versioned, with both versions live in the Contracts project for a period. Consumers move from V1 to V2 module by module, and the V1 contract is removed only when the last consumer has migrated. Treat a breaking change as the costliest move in the playbook, because every other team has to find time for the migration.

The diagram traces this on a timeline. V1 ships at t0. V2 ships at t1, when the breaking change is needed. The migration window between t1 and tN is the cost of the change, paid in coordination across teams. Only at tN, once the last consumer has moved, does V1 retire.
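Both kinds of change can be sketched on contract records. In the example below, the `TourManagerEmail` field and the `SetTimeChanged` and `SetTimeChangedV2` events are hypothetical, invented only to show an additive change next to a versioned breaking one.

```csharp
#nullable enable
using System;

namespace Festival.Programming.Contracts.Events
{
    // Additive change: a new optional field with a default value. Existing
    // publishers and handlers compile unchanged when the solution is rebuilt.
    public sealed record ArtistConfirmed(
        Guid ArtistId,
        string ArtistName,
        DateOnly PerformanceDate,
        string StageName,
        string? TourManagerEmail = null); // hypothetical new field

    // Breaking change: the old shape stays in place, marked obsolete,
    // until the last consumer has moved to the new version.
    [Obsolete("Use SetTimeChangedV2. Remove once the last consumer has migrated.")]
    public sealed record SetTimeChanged(Guid ArtistId, DateTime NewStart);

    public sealed record SetTimeChangedV2(
        Guid ArtistId, DateOnly Date, TimeOnly NewStart, string StageName);
}
```

The Obsolete attribute turns every remaining V1 usage into a compiler warning, which gives the migration window a visible to-do list. One caution on the additive case: consumers that deconstruct the record positionally would still need a small update, because the generated Deconstruct method gains a parameter.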

Modular monoliths and stream-aligned teams

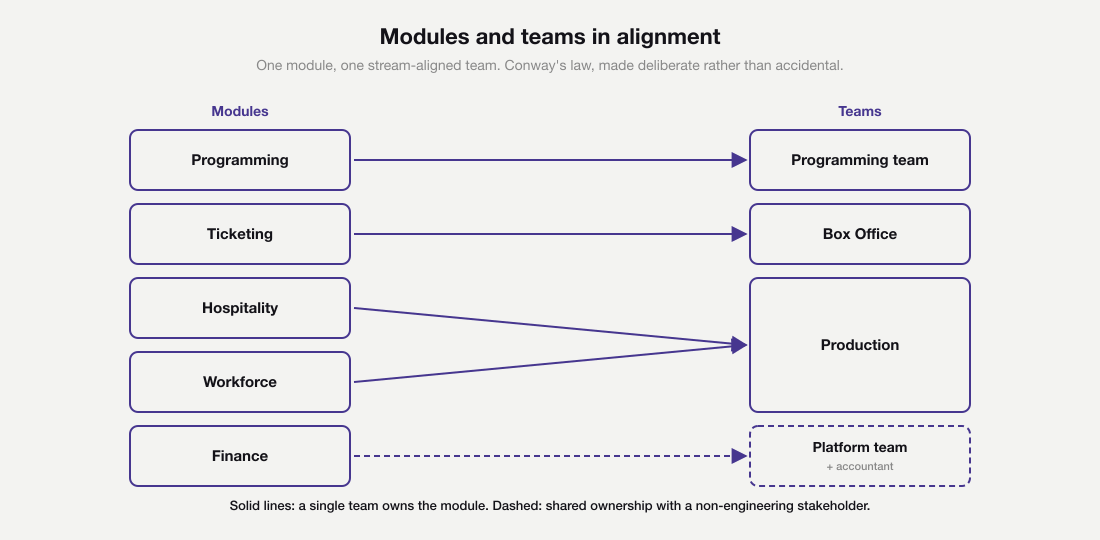

Code structure and team structure are not independent variables. Team Topologies, the work of Matthew Skelton and Manuel Pais, names four team types. The most common is the stream-aligned team, which owns a flow of value end to end. A modular monolith and stream-aligned teams reinforce each other when each module aligns to a value stream the team can name.

The festival’s value streams

Four value streams run through the festival modular monolith, each with a different customer. Naming them is the first step in mapping modules to teams.

Ticket purchase serves the festival-goer. It runs from the moment they discover the lineup to the moment they scan a ticket at the gate. Ticketing covers the digital portion of this stream end to end, with one upstream dependency on Programming for the lineup itself.

Artist journey serves the artist. It runs from first outreach through booking, on-site hospitality, performance and final settlement. Three modules carry the stream. Programming covers the booking, Hospitality covers the on-site logistics and Finance covers the settlement.

Festival operations serves the production manager and the volunteer coordinator on the day. It runs from volunteer recruitment through shift planning to on-the-day delivery. Workforce covers it.

Supplier settlement serves the festival as a business and its suppliers. It runs from cost calculation through payment to revenue recognition. Finance covers it.

From streams to teams to modules

The team mapping follows the value streams. The Programming team owns the Programming module, because their stream begins with artist booking. Box Office owns Ticketing, because their stream is the festival-goer journey from website to gate.

Production owns Hospitality and Workforce together, because their value streams cross on the day. The artist’s rider has to align with the volunteer’s shift, and both have to line up with the schedule. One team thinking across two modules removes a hand-off that would otherwise show up at the worst possible moment.

Finance is shared with the festival’s accountant and is owned by a small platform-style team. The settlement stream serves every other stream rather than a single end-user, so the team that owns it sits closer to a platform team than a stream-aligned one.

Team Topologies also names platform, enabling and complicated-subsystem teams. Some modules sit closer to those, like Finance here, because every other module depends on them. Naming the team type sharpens the ownership conversation.

Each team can plan, change and test its own module without checking with other teams, then ship it through the one shared deploy. In a healthy modular monolith, the only contract surface across team boundaries is the one in the Contracts project.

Conway’s law and deliberate design

This is also where Conway’s law shows its hand: a system’s design tends to mirror the communication structure of the organization that builds it. Picture an orchestra. Whatever you write on the page, the music that comes out is shaped by which instruments are in the room. The shape of the team graph and the shape of the module graph end up matching, whether the design intends it or not.

Designing the modules and the teams together makes the match a choice. Designing them apart, especially at different points in time, produces a hidden monolith dressed as modular code.

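The cost of getting this wrong is not theoretical. Martin Fowler’s ‘MonolithFirst’ piece makes a related point about service boundaries: they are hard to get right at the start, and moving functionality between services is much harder than moving it inside a monolith. The same point applies to module boundaries. A modular monolith gives a team a cheaper place to learn the right shape.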

Patterns that quietly erode modularity

Healthy modules degrade. The cause is rarely one bad decision. It is a run of small individual changes, each of them locally reasonable. Four patterns recur in most long-lived modular monoliths. Catching them on a pull request is worth more than any number of architecture diagrams.

The growing shared kernel

A ‘Common’ or ‘Shared’ project is created for genuinely cross-cutting things, like a result type or a clock abstraction. Over months, modules start putting domain types into it for convenience. A shared ‘OrderStatus’ enum appears, then a shared ‘Customer’ record.

That shared project becomes a hidden trunk every module depends on. The fix is to keep the shared kernel ruthlessly thin and to push anything domain-shaped back into a module.
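One way to keep the kernel thin is to make every addition a visible decision. The sketch below is a minimal, reflection-based check under stated assumptions: the namespace ‘Festival.Shared’, the types ‘IClock’ and ‘Result’, and the ‘SharedKernelGuard’ class are all hypothetical names chosen for illustration, not part of any library.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Reflection;

// Hypothetical shared kernel: only cross-cutting, domain-free types.
namespace Festival.Shared
{
    public interface IClock
    {
        DateTimeOffset UtcNow { get; }
    }

    public sealed record Result(bool IsSuccess, string? Error)
    {
        public static Result Ok() => new(true, null);
        public static Result Fail(string error) => new(false, error);
    }
}

// Hypothetical architecture check, intended to run as part of the test suite.
namespace Festival.ArchitectureChecks
{
    public static class SharedKernelGuard
    {
        // Every entry on this list is a deliberate, reviewed decision.
        private static readonly HashSet<string> Allowed = new()
        {
            "Festival.Shared.IClock",
            "Festival.Shared.Result",
        };

        // Returns the public types in the shared namespace that are not on the allowlist.
        public static IReadOnlyList<string> FindUnexpectedTypes(Assembly assembly) =>
            assembly.GetExportedTypes()
                .Where(t => t.Namespace == "Festival.Shared")
                .Select(t => t.FullName ?? t.Name)
                .Where(name => !Allowed.Contains(name))
                .OrderBy(name => name)
                .ToList();
    }
}
```

A test that asserts the returned list is empty fails the moment someone adds a public ‘Customer’ record to the shared namespace. The fix is then either to move the type into a module or to edit the allowlist, and an allowlist edit is easy to spot on a pull request.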

The cross-module SQL join

A reporting feature is needed quickly. Someone writes a query that joins two modules’ tables directly, bypassing the public contracts. The query works.

Six months later, a schema change in one module silently breaks a report owned by another team. Treat any cross-module data need as a first-class contract. Either the source module exposes a query, or the consuming module owns its own read model fed by events.
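The second option, a read model fed by events, can be sketched in a few lines. This is a minimal illustration, assuming Finance needs ticket revenue per festival day. The event ‘TicketSold’ and the class ‘RevenuePerDayReadModel’ are hypothetical names, and the read model is kept in memory here where a real one would sit in tables that Finance owns.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical contract published by Ticketing; the only Ticketing type Finance sees.
namespace Festival.Ticketing.Contracts
{
    public sealed record TicketSold(Guid TicketId, string FestivalDay, decimal Price);
}

// Hypothetical consumer: Finance keeps its own copy of what it needs
// instead of joining against Ticketing's tables.
namespace Festival.Finance
{
    using Festival.Ticketing.Contracts;

    internal sealed class RevenuePerDayReadModel
    {
        private readonly Dictionary<string, decimal> _revenue = new();
        private readonly HashSet<Guid> _seenTickets = new();

        // Called by whatever delivers events in-process.
        public void Handle(TicketSold e)
        {
            // Ignore an event that is delivered twice for the same ticket.
            if (!_seenTickets.Add(e.TicketId)) return;

            _revenue.TryGetValue(e.FestivalDay, out var current);
            _revenue[e.FestivalDay] = current + e.Price;
        }

        // Total revenue recorded for a day; zero when nothing was sold.
        public decimal RevenueFor(string festivalDay) =>
            _revenue.TryGetValue(festivalDay, out var total) ? total : 0m;
    }
}
```

Ticketing can now rename columns or split tables without touching the report. The only thing it has to keep stable is the shape of ‘TicketSold’.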

The leaking enum

A module exposes a public type that includes an enum from its internal domain. Other modules start switching on the enum. When the source module wants to add a new value, it cannot, because every consuming module has an exhaustive switch.

Keeping domain enums internal solves this. Expose a contract-level type that carries only the distinctions other modules genuinely need.
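A minimal sketch of that separation, assuming the only thing other modules need to know about a ticket is whether it gets someone through the gate. The enum ‘TicketState’, the record ‘TicketStatusView’ and the mapper are hypothetical names for illustration.

```csharp
using System;

// Hypothetical contract: one distinction, not the whole lifecycle.
namespace Festival.Ticketing.Contracts
{
    public sealed record TicketStatusView(Guid TicketId, bool IsValidForEntry);
}

// Hypothetical module internals.
namespace Festival.Ticketing
{
    using Festival.Ticketing.Contracts;

    // Internal lifecycle; free to grow without breaking consumers.
    internal enum TicketState { Reserved, Paid, Scanned, Refunded }

    internal static class TicketStatusMapper
    {
        // The single place where internal states are translated for the outside world.
        public static TicketStatusView ToContract(Guid ticketId, TicketState state) =>
            new(ticketId, IsValidForEntry: state == TicketState.Paid);
    }
}
```

Adding a new internal state later changes the enum and, at most, the mapper. No consuming module has a switch to update.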

The chatty event chain

Module A publishes an event. Module B reacts and publishes its own event. Module C reacts to that and publishes a third. Business logic ends up encoded across three handlers in three modules, with no one place to read what the workflow does.

Put orchestration in a named place. A small workflow inside the module that owns the outcome reads better than a chain of cascading reactions.
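As a sketch of what a named place can look like, take artist settlement. Finance owns the outcome, so the workflow lives in Finance and calls the other modules through their contracts. Everything here is an assumption made for illustration: the interfaces ‘IProgrammingApi’ and ‘IHospitalityApi’, the ‘ArtistSettlementWorkflow’ class, and the rule that rechargeable hospitality costs are deducted from the fee.

```csharp
using System;

// Hypothetical contracts from the two upstream modules.
namespace Festival.Programming.Contracts
{
    public interface IProgrammingApi
    {
        bool HasPerformed(Guid artistId);
        decimal AgreedFee(Guid artistId);
    }
}

namespace Festival.Hospitality.Contracts
{
    public interface IHospitalityApi
    {
        decimal RechargeableCosts(Guid artistId);
    }
}

// Finance owns the outcome (the settlement), so the workflow lives here.
namespace Festival.Finance
{
    using Festival.Hospitality.Contracts;
    using Festival.Programming.Contracts;

    public sealed record Settlement(Guid ArtistId, decimal AmountDue);

    internal sealed class ArtistSettlementWorkflow
    {
        private readonly IProgrammingApi _programming;
        private readonly IHospitalityApi _hospitality;

        public ArtistSettlementWorkflow(IProgrammingApi programming, IHospitalityApi hospitality)
        {
            _programming = programming;
            _hospitality = hospitality;
        }

        // The whole business rule reads top to bottom in one method.
        public Settlement? Settle(Guid artistId)
        {
            // Step 1: no performance, no settlement.
            if (!_programming.HasPerformed(artistId)) return null;

            // Step 2: start from the agreed fee.
            var fee = _programming.AgreedFee(artistId);

            // Step 3: deduct the costs the artist agreed to cover (assumed rule).
            var costs = _hospitality.RechargeableCosts(artistId);

            return new Settlement(artistId, fee - costs);
        }
    }
}
```

Events still have a place here. A ‘performance finished’ event can trigger ‘Settle’, but the steps themselves are written down once, in the module that answers for the result.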

When a modular monolith is the wrong choice

The pattern has limits. A module that needs very different scaling from the rest of the system is a candidate for a separate process. Sub-millisecond pricing engines do not belong in the same process as a daily settlement job.

Strict rules on data separation, whether regulatory or contractual, are another reason to extract a service from a modular monolith. Some workloads have to leave the building because their data has to be kept apart.

Independent release cadences are a weaker reason than they look. The desire to deploy one module without the others is usually a workflow problem, not an architecture problem. A well-tested modular monolith deploys safely many times a day.

A modular monolith also makes a later service split much cheaper. The contract surface and the data ownership are already separated. Shopify’s experience report on its modular monolith documents this on one of the largest production codebases in the industry.

What to do with a growing C# codebase this quarter

If a single .NET solution has crossed the point where teams routinely conflict, a modular monolith is the most likely next step. The cheapest opening move is the four lenses.

Apply the lenses to the existing code on a Friday afternoon. Pick three candidate boundaries. Walk them through modularity, coupling, cohesion and composition. The boundary that survives all four is the one to invest in first.

Pair the lenses with one of the discovery techniques. Half a day of Event Storming with the people who run the domain, or a capability map of the business, is enough to test whether the boundary you chose matches what the work actually looks like outside the codebase.

The next step is not a new repo or a new platform. It is a Contracts project for the chosen module. Everything else inside the module is marked internal. A single in-process event bus carries the first cross-module fact. From there, the modular monolith grows one module at a time, each one earning its place.
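The in-process event bus does not need to be a framework. A minimal sketch, assuming synchronous delivery with no persistence and no retries, is enough to carry the first fact. The class ‘InProcessEventBus’, its namespace and the event ‘ArtistBooked’ are hypothetical names for illustration.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical contract event: the first cross-module fact.
namespace Festival.Programming.Contracts
{
    public sealed record ArtistBooked(Guid ArtistId, string Stage);
}

// Hypothetical host-level infrastructure shared by all modules.
namespace Festival.Infrastructure
{
    // Synchronous, in-memory, single process. Handlers run on the publisher's thread.
    public sealed class InProcessEventBus
    {
        private readonly Dictionary<Type, List<Action<object>>> _handlers = new();

        public void Subscribe<TEvent>(Action<TEvent> handler) where TEvent : notnull
        {
            if (!_handlers.TryGetValue(typeof(TEvent), out var list))
            {
                list = new List<Action<object>>();
                _handlers[typeof(TEvent)] = list;
            }

            // Wrap the typed handler so all handlers share one storage type.
            list.Add(e => handler((TEvent)e));
        }

        public void Publish<TEvent>(TEvent @event) where TEvent : notnull
        {
            // An event with no subscribers is simply dropped.
            if (!_handlers.TryGetValue(typeof(TEvent), out var list)) return;

            foreach (var handler in list)
            {
                handler(@event);
            }
        }
    }
}
```

Programming would call ‘Publish’ with an ‘ArtistBooked’ event, and Hospitality would register a handler through ‘Subscribe’ at startup. Neither module references the other, only the Contracts project and the bus. Because delivery is synchronous, an exception in a handler surfaces in the publisher, which is a trade-off worth deciding on deliberately.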

Once the second module joins, watching for the four erosion patterns becomes the day-to-day discipline. They surface in pull requests rather than in design reviews. Catching them there is what keeps the architecture from drifting back to the tangled monolith it replaced.